Introduction: Edge computing vs cloud computing

Edge Computing Vs Cloud Computing:

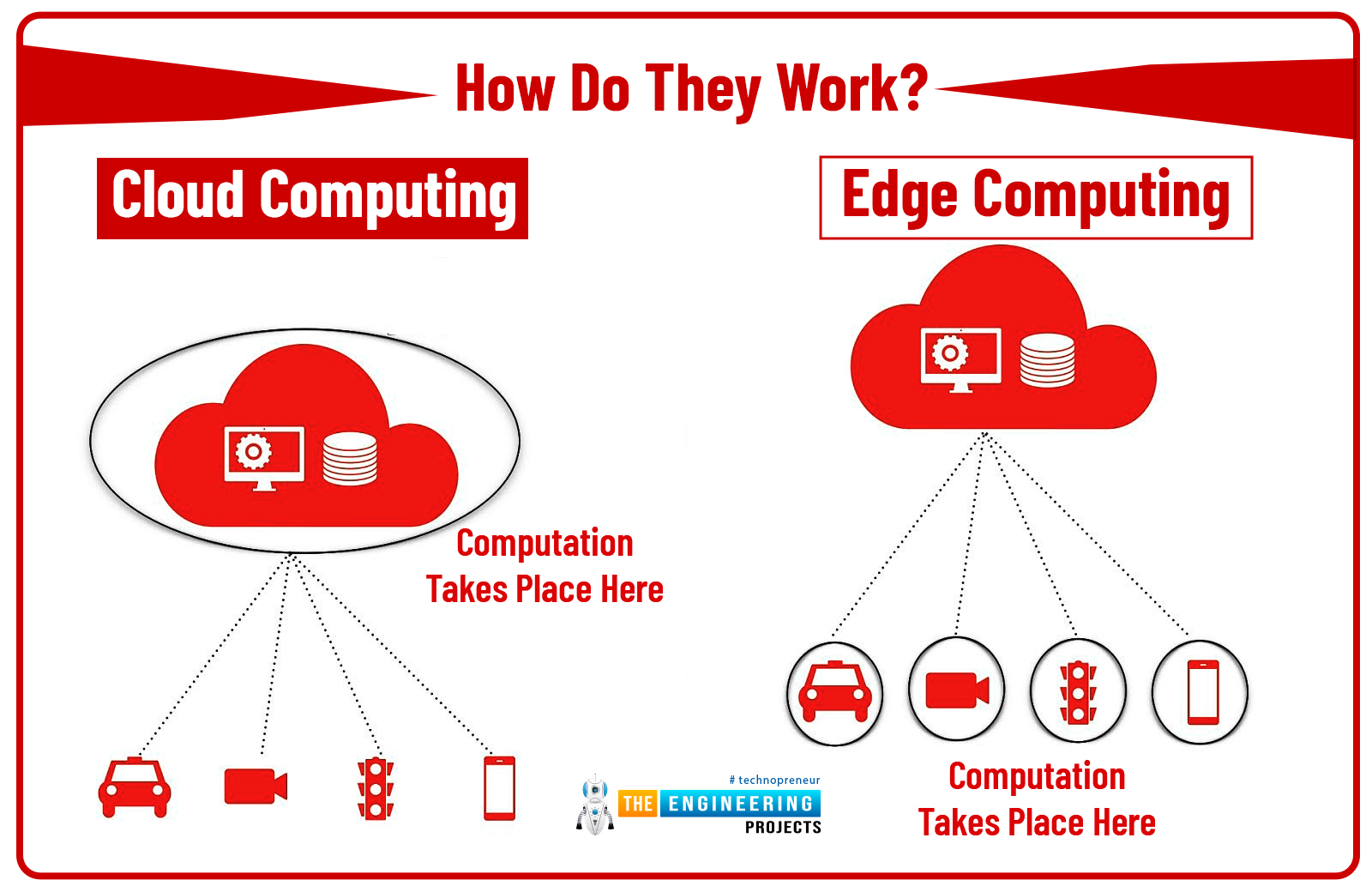

Understanding these two powerhouses begins with grasping their basic functionality. So, what is edge computing, and what makes up the cloud?

Put simply, edge computing is a decentralized approach in which data is processed close to its source, at the ‘edge’ of the network, rather than in a centralized data center. The intent? To save bandwidth and reduce latency.

Cloud computing, on the other hand, relies on large, centralized servers for storage and processing. Businesses can tap into these cloud resources on demand, which lets them scale up or down as their needs change.

When to Use Edge Computing?

- Edge computing shines in instances where rapid, real-time data processing is crucial.

- IoT devices are a prime example. With edge computing, they can process data locally, reducing latency and ensuring quick, efficient responses.

- For instance, in an autonomous vehicle, edge computing can analyze data from numerous sensors and make critical decisions almost instantly, which is essential when any delay could have disastrous results.
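
To make the local-processing idea concrete, here is a minimal Python sketch of an edge node that reacts to each sensor reading immediately and forwards only a compact summary upstream. The names (`EdgeNode`, `brake_threshold_m`, `process`, `summary`) and the threshold value are hypothetical, chosen purely for illustration; a real vehicle or IoT stack would look quite different.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import List


@dataclass
class EdgeNode:
    """Hypothetical edge node: decides locally, uploads only summaries."""
    brake_threshold_m: float = 5.0  # assumed distance threshold, illustrative only
    readings: List[float] = field(default_factory=list)

    def process(self, distance_m: float) -> str:
        """Handle one sensor reading locally, with no network round trip."""
        self.readings.append(distance_m)
        return "BRAKE" if distance_m < self.brake_threshold_m else "OK"

    def summary(self) -> dict:
        """Build the small payload that would be sent to the cloud."""
        if not self.readings:
            return {"count": 0}
        payload = {
            "count": len(self.readings),
            "min_m": min(self.readings),
            "mean_m": mean(self.readings),
        }
        self.readings.clear()  # start a fresh batch after reporting
        return payload


node = EdgeNode()
decisions = [node.process(d) for d in [12.0, 8.0, 4.0, 9.0]]
report = node.summary()
print(decisions)
print(report)
```

Each decision is made on the device itself, and only one small dictionary per batch would need to cross the network, which is where the latency and bandwidth savings come from.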

When is Cloud Computing an Optimal Choice?

- Cloud computing is the superstar when it comes to handling enormous amounts of data cost-effectively.

- Businesses large and small have embraced cloud computing for its scalability, ease of access, and cost efficiency.

- When your online application needs to store vast amounts of data, stream content, or run complex analytics, cloud computing is your go-to technology.
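
The scalability point can be illustrated with a small sketch of how an autoscaler might size a cloud deployment to match current demand. The function name `instances_needed`, the per-instance capacity, and the request rates are all assumptions made for this example, not figures from any particular provider.

```python
import math


def instances_needed(requests_per_s: float, capacity_per_instance: float,
                     min_instances: int = 1) -> int:
    """Return how many identical instances are needed to serve the load."""
    if capacity_per_instance <= 0:
        raise ValueError("capacity_per_instance must be positive")
    return max(min_instances, math.ceil(requests_per_s / capacity_per_instance))


# Illustrative traffic levels: quiet, normal, and peak.
for load in (50, 900, 4200):
    print(load, "req/s ->", instances_needed(load, capacity_per_instance=500))
```

Because capacity is rented rather than owned, the instance count can follow the load up and back down, which is the basis of the cost-efficiency argument above.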

Edge Computing Vs Cloud Computing: Who’s the Winner?

Conclusion

Neither technology is an outright winner. Edge computing excels when low latency matters most, while cloud computing excels at large-scale storage and analytics, so the most effective solution often combines both. Instead of treating edge computing vs cloud computing as a race, it’s time we appreciate their individual strengths and their collective power to shape a more efficient, connected world.
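
As a rough illustration of combining the two, the sketch below routes a workload to the edge or the cloud based on how quickly it needs a response. The `Workload` type, the `place` function, and the 50 ms cutoff are hypothetical; real placement decisions would also weigh cost, connectivity, and data volume.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    max_latency_ms: float  # how long the caller can wait for a result


def place(workload: Workload, edge_cutoff_ms: float = 50.0) -> str:
    """Pick a tier: tight latency budgets stay at the edge, the rest go to the cloud."""
    return "edge" if workload.max_latency_ms <= edge_cutoff_ms else "cloud"


jobs = [
    Workload("obstacle detection", max_latency_ms=10),
    Workload("monthly analytics report", max_latency_ms=60_000),
]
for job in jobs:
    print(f"{job.name}: {place(job)}")
```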